Description

Our Transforming Transformation project seeks to explore new approaches to sound synthesis and transformation within immersive environments.

The first phase of this is ‘Transforming Transformation: 3D Models for Interactive Sound Design’, an AHRC-funded project in collaboration with Glasgow School of Art’s Digital Design Studios and Two Big Ears.

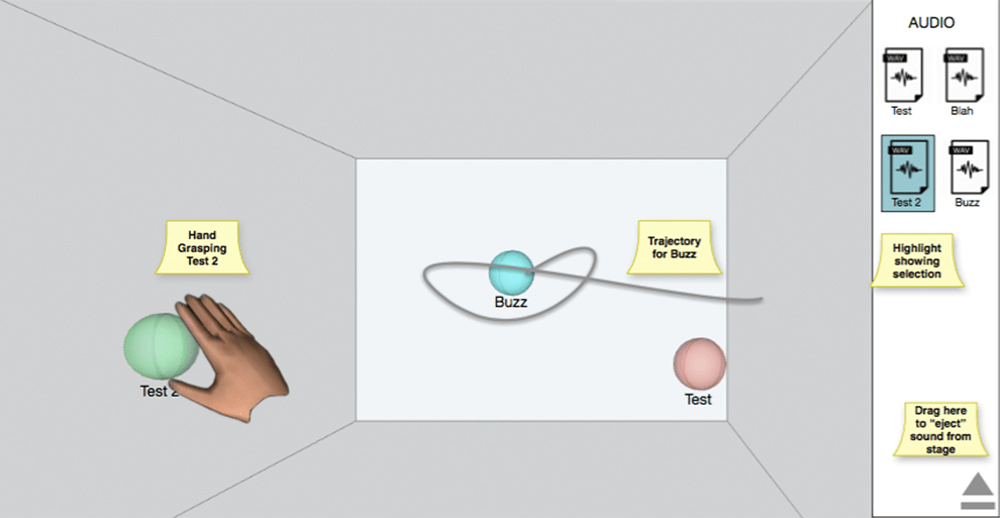

The project opens up a new field of music technology research by exploring human-centred approaches to creative sound design. The project will involve the development of a proof-of-concept system, which enables sound to be manipulated through hand movements as if it were an invisible 3D object. The interaction will be enhanced by real-time visualisation within a virtual 3D space. The research surfaces the notion of a ‘natural’ interface for sound design, one that takes account of already-learned behaviour and innate skills such as motor memory, gesture and spatial awareness. The aim is to enable musicians and sound designers to ‘think in sound’ when working with technology, catalysing a shift from technology-centric to human-centric models.

As a pilot project, we will concentrate on one specific audio processing technique: sound spatialisation, with a view to later amplifying our research findings in a larger project, addressing a wider range of sound design practices. In simple terms: our system will explore the idea that we can make users feel as if they are directly positioning sound in space by ‘picking it up and moving it’ with their hands, with the aim of serving as an exemplar for future, more detailed studies.

Sound design entails the manipulation of sound for dramatic, realistic, musical, or emotional effect. Typically, audio is processed using techniques such as filtering, time-stretching, pitch shifting, granulation, attenuation and panning. These techniques are exposed to the sound designer through discrete controls such as ‘sliders’ and ‘dials’, each of which controls a single parameter in the underlying signal processing system. By contrast, human concepts of sound are characterised by polymorphous, perceptual, metaphorical, symbolic and onomatopoeic associations. Many of these associations are cross-sensory, for example: ‘warm’, ‘bright’, ‘distant’, ‘metallic’. Furthermore, they suggest physicality and invite the possibility of direct quasi-tactile manipulation. This is especially true of spatialisation, where there exists a sense of sound localisation within an acoustic space, further evoking the idea of tangibility: ‘moving’ or ‘placing’ a sound, or even ‘pushing’ a sound to provide inertia in a given direction.
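To make the contrast concrete, here is a minimal sketch of how a single hand position could drive two conventional parameters (panning and attenuation) at once, rather than each being set with its own slider. This is an illustration only, not the project's implementation: the function name `hand_to_stereo_gains`, the coordinate convention (listener at the origin, +x to the right, +z straight ahead, units in metres) and the `ref_distance` parameter are all assumptions, and constant-power stereo panning with inverse-distance attenuation stands in for whatever spatialisation method the system actually uses.

```python
import math


def hand_to_stereo_gains(x, y, z, ref_distance=1.0):
    """Map a hypothetical hand position to (left, right) channel gains.

    Assumed convention: listener at the origin, +x right, +z forward, metres.
    """
    distance = math.sqrt(x * x + y * y + z * z)
    # Inverse-distance attenuation, clamped so the gain never exceeds 1.
    attenuation = ref_distance / max(distance, ref_distance)
    # Azimuth: 0 is straight ahead, +pi/2 is hard right.
    # Positions behind the listener are clamped to the nearest side.
    azimuth = math.atan2(x, z)
    azimuth = max(-math.pi / 2, min(math.pi / 2, azimuth))
    # Constant-power pan law: theta runs from 0 (hard left) to pi/2 (hard right).
    theta = (azimuth + math.pi / 2) / 2
    return attenuation * math.cos(theta), attenuation * math.sin(theta)


if __name__ == "__main__":
    # A hand held one metre straight ahead gives equal left and right gains.
    print(hand_to_stereo_gains(0.0, 0.0, 1.0))
```

Moving the hand sideways shifts the balance between the channels while moving it away lowers both gains, so one gesture replaces two separate controls.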

Our research therefore challenges existing sound design models and explores the notion of a ‘natural’ and direct corporeal link between imagined sound and acoustic results. In order to achieve this, we will draw upon the immense benefits offered by the PI’s research lab within a leading music Conservatoire. Being based within a Conservatoire environment provides the project with immediate and unfettered access to high-quality musicians from a range of backgrounds. This serves to ground the research firmly in artistic practice, allowing artistic ideas to both test extant models and serve as the basis for new ones. Formal user experience reviews will be undertaken at milestone stages in the project, allowing us to surface users’ tacit views and opinions on their experience when using the developed system.

The research method will also entail the commissioning of a new musical work utilising the system, to be premiered in a public workshop and concert in the final month of the project. This will serve as both an opportunity for testing in a ‘real world’ creative scenario and a means of disseminating the project’s outputs in a public forum. There will also be a mid-project ‘experimental jam’ using the in-development system, which will be broadcast live on the internet.

Funding

Related Posts

Transforming Transformation: “conclusions and future work”

Our AHRC Digital Transformations project "Transforming Transformation" officially finished last week, so I thought I'd share a few thoughts about how the project went and [...]

MiXD website now online

As part of the final phase of our AHRC Digital Transformations we're organising a national symposium on Music Interaction Design (MiXD). At the symposium we [...]

Glasgow School of Art showreel

Great to see our AHRC Digital Transformations project featured in our project partner Glasgow School of Art's digital showreel (at 23 seconds in).

MiXD videos now available

https://www.youtube.com/watch?v=Xq-_CChlufc&list=PL7E6VwpzpQbn7-ueF8wFBN9Idnf-P8nF_ The videos from our MiXD symposium are now available via the following link. The symposium was a huge success and featured a presentation of [...]

ICLI Paper accepted

Our paper titled 'Approaches to Visualising the Spatial Position of Sound-objects' has been accepted for publication as a paper and demo at the International Conference [...]

esthesis: performance at MiXD

https://www.youtube.com/watch?v=dBRzhuQ3W5w As part of our AHRC Digital Transformations project "Transforming Transformation" we commissioned a new artistic work to explore the concept of transforming sound through [...]

EVA paper accepted

Our paper titled 'Approaches to Visualising the Spatial Position of Sound-objects' has been accepted for publication as a paper and demo at EVA London 2016. [...]

Keynote confirmed for MiXD symposium

In order to greatly extend the final workshop of our AHRC Digital Transformations project we've decided to host a national symposium on Music Interaction Design [...]

Transforming Transformation Experiment 4

https://vimeo.com/album/3830936/video/152990816 This is the fourth experiment in a 1-year AHRC Digital Transformations project run jointly between Birmingham Conservatoire’s Integra Lab and Glasgow School of [...]

Adding vibrotactile feedback

One of the findings from our first round of tests for the system we have developed for our AHRC Digital Transformations project "Transforming Transformation" is [...]

Artistic commission

We're pleased to announce that composer and sound artist James Dooley has accepted the artistic commission for our AHRC-funded Transforming Transformation project (working title "Integra [...]

Initial test results

We have now completed the first round of testing on the system we've been developing for our AHRC Digital Transformations "Transforming Transformation" project. Full results [...]

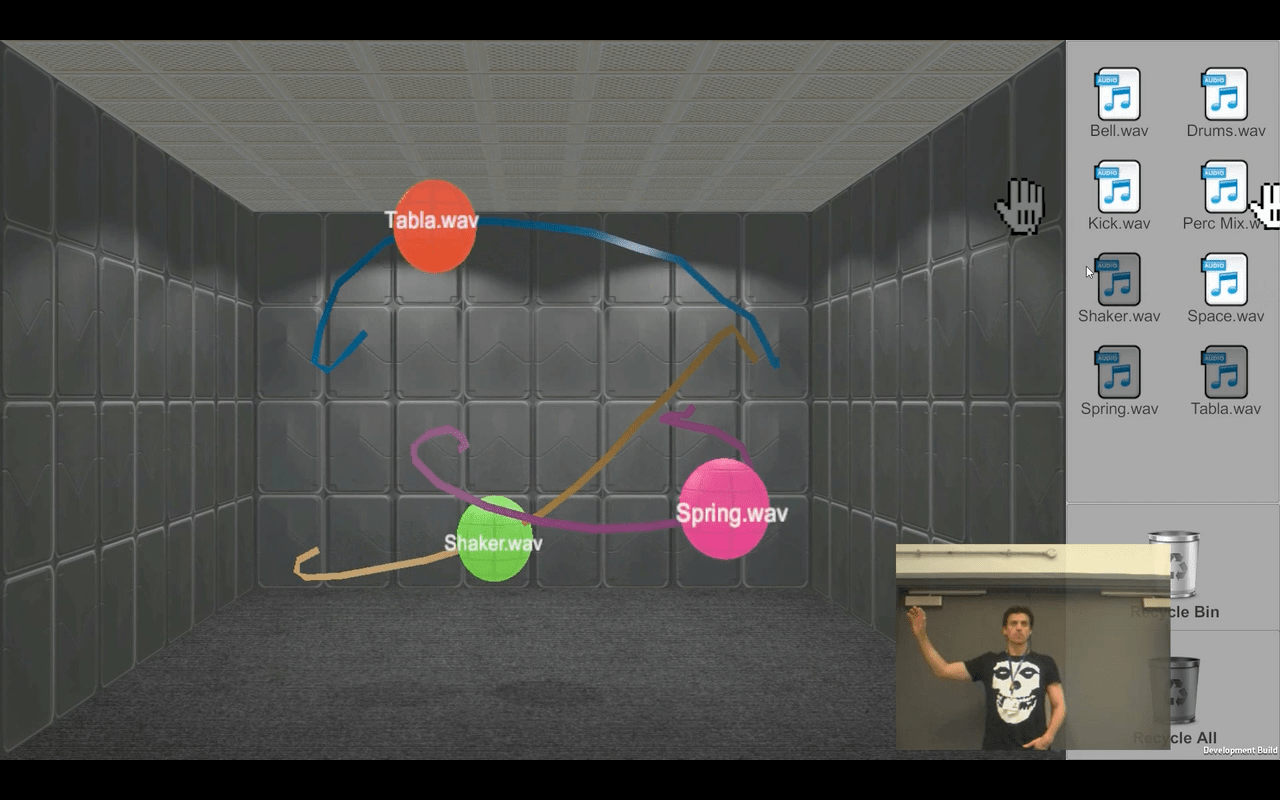

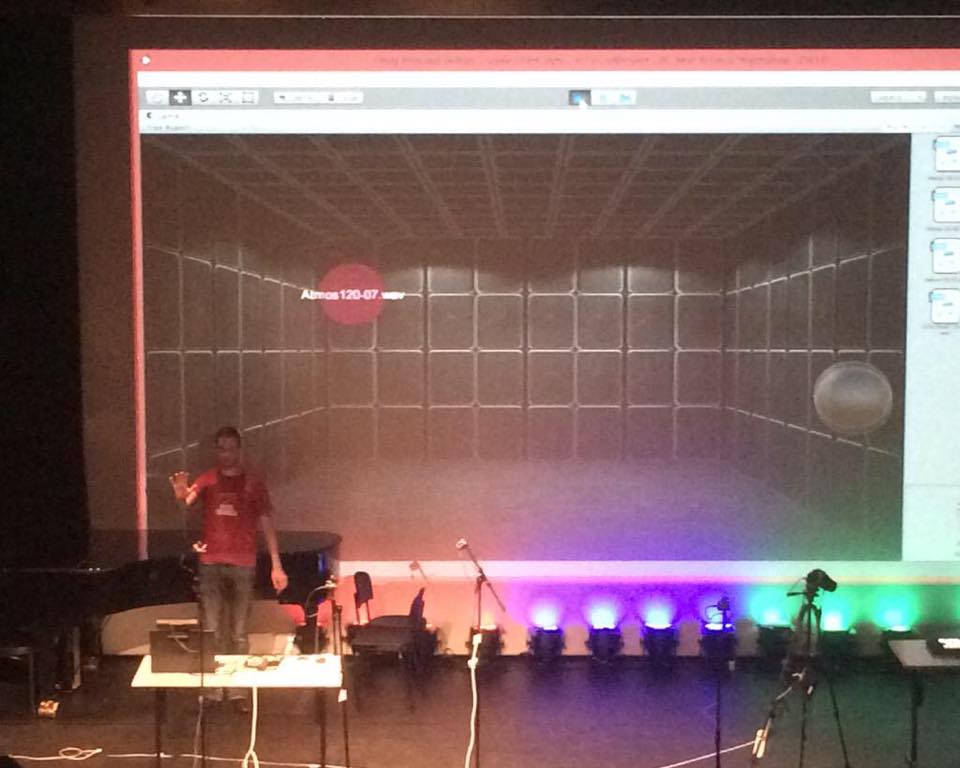

Transforming Transformation at Music Tech Fest

Today I demo'd the first prototype of our 3D Sound Transformation system at Music Tech Fest Central in Ljubljana. The system-in-progress has been given the [...]

Transforming Transformation experiment 3

https://vimeo.com/139458528 This is the third experiment in a 1-year AHRC Digital Transformations project run jointly between Birmingham Conservatoire’s Integra Lab and Glasgow School of [...]

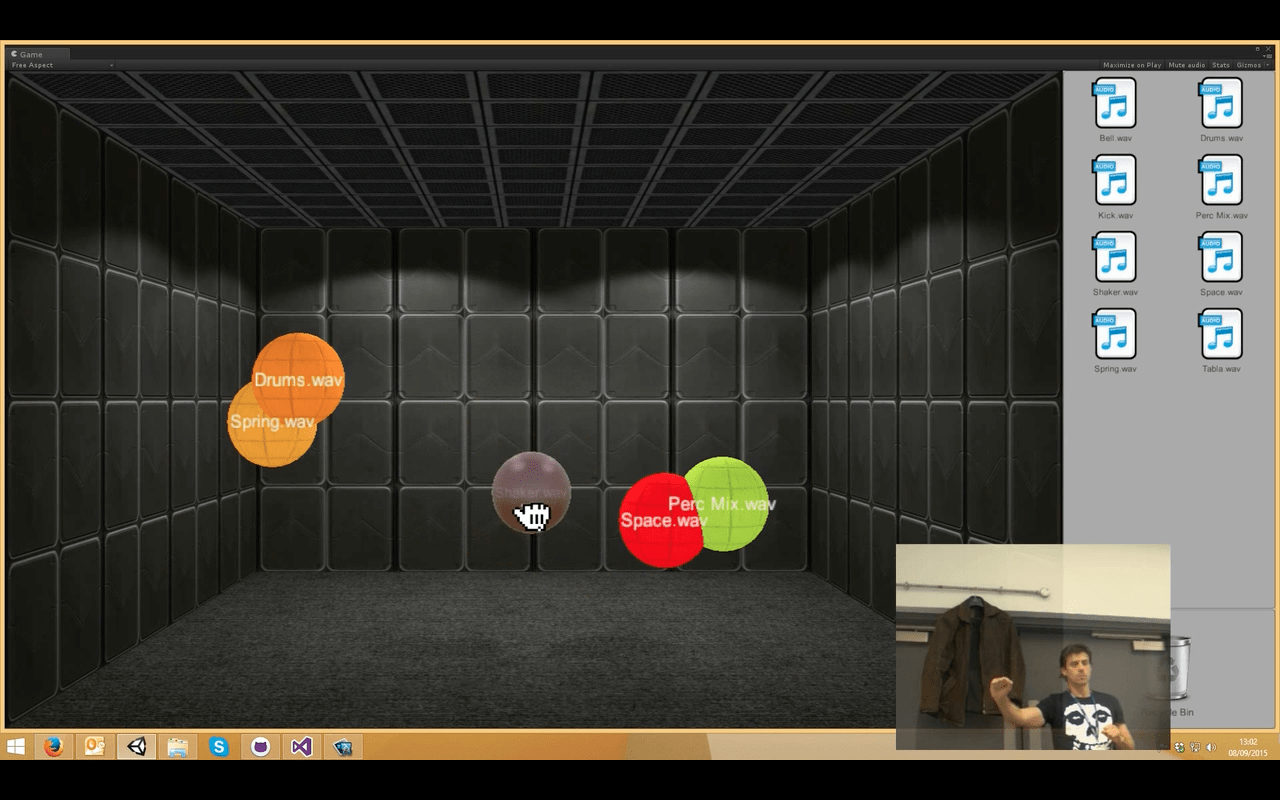

Transforming Transformation experiment 2

https://vimeo.com/138194406 This is the second experiment in a 1-year AHRC Digital Transformations project run jointly between Birmingham Conservatoire’s Integra Lab and Glasgow School [...]

Announcing the MiXD symposium

As part of our AHRC Digital Transformations project we were planning to present our final system (working title "Integra Forms") through a national public workshop [...]

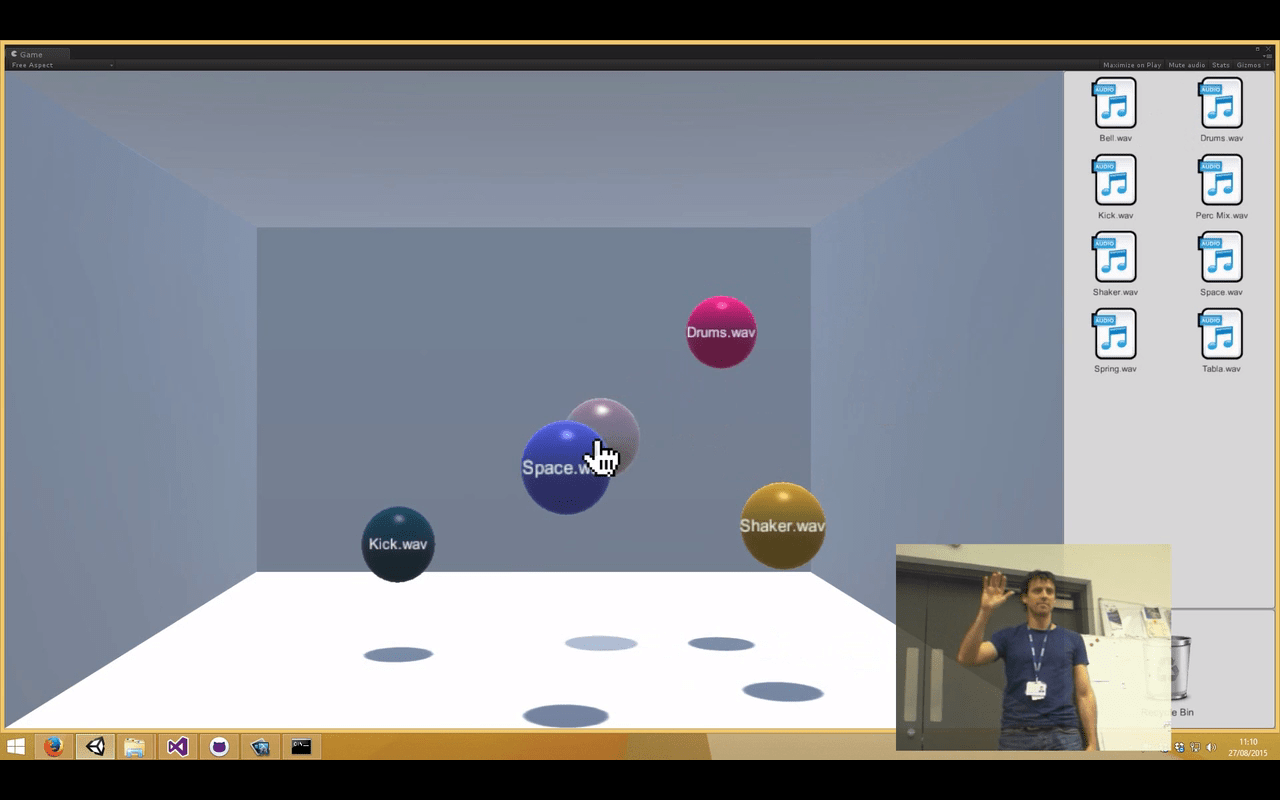

Transforming Transformation experiment 1

https://vimeo.com/131588660 This is the initial experiment in a 1-year AHRC Digital Transformations project run jointly between Birmingham Conservatoire’s Integra Lab and Glasgow School [...]

Designing a human-centred system for sound transformation

At its core our AHRC Digital Transformations project "Transforming Transformation" has a simple aim: to instigate a new way of transforming sound that radically improves [...]

Fully articulated hand tracking with Kinect 2

This video from Microsoft Research shows some of the hand-tracking capabilities of the Kinect. Shame this functionality isn't yet in the public SDK as it [...]

Choosing technologies and frameworks

Choosing technologies and frameworks is an important early stage of any digital research project. Basing a project on the wrong tech can cost time and [...]

AHRC Digital Transformations project begins

We are delighted to announce the beginning of our AHRC-funded Digital Transformations project, titled "Transforming Transformation: 3D Models for Interactive Sound Design". This will be [...]