Our AHRC Digital Transformations project “Transforming Transformation” officially finished last week, so I thought I’d share a few thoughts about how the project went and what our future directions might be.

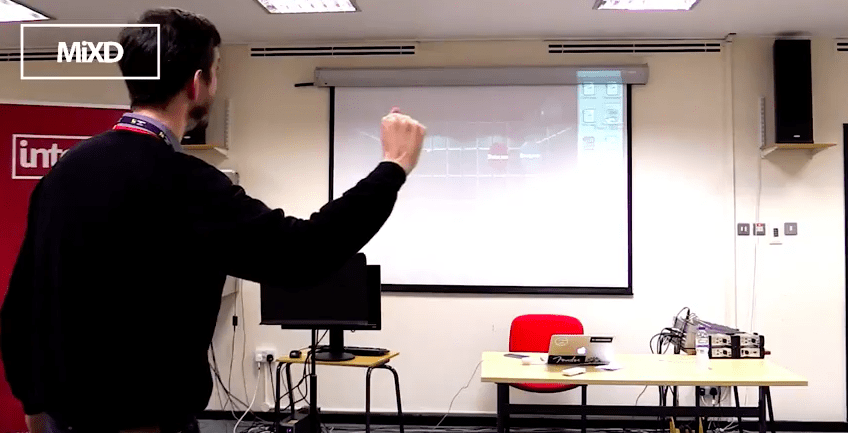

The aim of the project was to initiate a new human-centred approach to sound transformation that departs from existing approaches by allowing musicians and sound designers to manipulate sound directly as though it were an invisible 3D object. To achieve this we designed a system that enabled sound sources to be “touched”, “grabbed”, “dragged”, and “placed” within a 3D virtual environment where a sound source’s location controls its corresponding spatial position within a virtual acoustic space. In simple terms, if a sound designer wants a sound to appear to come from behind the listener, they just “pick it up” and “move it to the back” of the virtual space.
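As a rough illustration of what "location controls spatial position" can mean in practice, the sketch below converts a source's position in the virtual space into the azimuth, elevation, and distance that a spatialiser typically expects. The function name `to_spherical` and the coordinate convention (listener at the origin, x to the right, y up, z forwards) are assumptions made for this example, not details of the project's implementation.

```python
import math


def to_spherical(x, y, z):
    """Convert a listener-centred position to (azimuth, elevation, distance).

    Assumed convention (hypothetical, for illustration only): the listener
    sits at the origin, x points right, y points up, z points forwards.
    Angles are in degrees; azimuth 0 is straight ahead, positive to the right.
    """
    distance = math.sqrt(x * x + y * y + z * z)
    if distance == 0.0:
        # Source is exactly at the listener: direction is undefined.
        return 0.0, 0.0, 0.0
    azimuth = math.degrees(math.atan2(x, z))
    elevation = math.degrees(math.asin(y / distance))
    return azimuth, elevation, distance


# A source "moved to the back" of the space, two units behind the listener.
azimuth, elevation, distance = to_spherical(0.0, 0.0, -2.0)
```

Under this convention, a source dragged directly behind the listener ends up at an azimuth of 180 degrees with zero elevation.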

Furthermore, the system allows spatial trajectories to be “drawn” in the space by “finger tracing” a line; sources placed on the line will have their spatial position animated along it. This could be used, for example, to simulate the sound of a vehicle moving past the listener. Finally, the relative level of each sound can be changed through a “louder / quieter” in-air index finger gesture.
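One way to picture the trajectory animation is as a source sliding along a polyline of traced points. The sketch below is a minimal example under that assumption: it treats the traced line as a list of 3D points and returns the position a given fraction of the way along it, measured by distance travelled. The function name `point_on_path` and the polyline representation are hypothetical and are not taken from the project's code.

```python
import math


def point_on_path(points, t):
    """Return the position a fraction t (0..1) along a polyline.

    `points` is a list of (x, y, z) tuples, assumed here to be the samples
    of a finger-traced line. Progress is measured by arc length, so the
    source moves at constant speed however unevenly the line was sampled.
    """
    if not points:
        raise ValueError("path needs at least one point")
    t = min(max(t, 0.0), 1.0)  # clamp progress to the ends of the path
    segments = list(zip(points, points[1:]))
    lengths = [math.dist(a, b) for a, b in segments]
    total = sum(lengths)
    if total == 0.0:
        return tuple(points[0])
    target = t * total  # distance to travel from the start of the path
    for (a, b), seg in zip(segments, lengths):
        if seg > 0.0 and target <= seg:
            f = target / seg
            return tuple(pa + f * (pb - pa) for pa, pb in zip(a, b))
        target -= seg
    # Rounding can leave a tiny remainder; finish at the last point.
    return tuple(points[-1])


# A "vehicle" passing from left to right, two units in front of the listener.
path = [(-5.0, 0.0, 2.0), (5.0, 0.0, 2.0)]
midpoint = point_on_path(path, 0.5)
```

Calling this once per animation frame with `t` increasing from 0 to 1 would move the source steadily from one end of the traced line to the other.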

Results

Our intention was to take a very simple and obvious mapping between human movement and sound transformation and test it with a representative group of target users. Our results have now been published in a number of peer-reviewed papers and we will continue to publish as new findings emerge. We have also previously posted on some of the initial findings. In summary, our test participants found our Transforming Transformation system (working title “Integra Forms”) engaging and novel compared to existing systems. It was also clearer to users how to perform a range of tasks. However, our research identified a number of problems:

- Users had significant difficulty grasping sounds within the 3D environment because they were not able to accurately match object boundaries to their physical hand position

- The ability to visualise the interaction and the sense of immersion were diminished by the size and distance of the computer screen. This distance was dictated by the Microsoft Kinect's working distance of more than 1.4 m

- Hand position and grasp were significantly harder to track in dark environments. See this demo, for example.

- Difficulties were experienced when the hand was not facing “palm forwards” towards the Kinect. If the palm was angled past 45 degrees to the horizontal, grasp could not be detected reliably

Future Work

The project has already begun to address the problem of “grasp” by adding haptic feedback to the system. Our initial tests with this have been very promising and have dramatically improved the experience and accuracy of grasping sound-objects. In short, the haptics allow us to “feel” sounds as we grab and manipulate them.

The project has been challenging and immensely rewarding, and a lot has been achieved in a short time span. I believe we have devised a highly novel system, which demonstrates the potential we have for transforming sound transformation using 3D interaction. Exploring this concept through an artistic commission has led to a radically new approach to performance and creative practice.

We feel that it is our role now to take this potential and greatly expand it. In this project we have started with the most basic and simple interactions for controlling the spatial position of sounds, their loudness and trajectory. There are vastly more possibilities for sound transformation in the palette of sound designers and music producers, and our next challenge is to take these possibilities and express them in a way that is aligned with human imagination, so that sound can not only be grasped and moved, but stretched, expanded, sculpted, shaped and formed through the infinite nuances of human movement.