Earlier this year, Integra Lab hosted a showcase performance in the Royal Birmingham Conservatoire’s contemporary performance space, the Lab. The event featured works from our cohort of researchers, with an exciting mix of electroacoustic and audiovisual systems used in performance. The event is summarised below in four short pieces by Edmund Hunt, Matthew Evans and Joe Wright.

Integra Pipes

The Northumbrian Smallpipes are a small, bellows-operated bagpipe used in the traditional music of Northern England. Compared to other bagpipes, the instrument is relatively complex. A system of keys allows chromaticism over a range of about two octaves. Five drones can be turned on or off, and can be set to a number of different pitches.

This performance used two traditional tunes from the north east of England: ‘The Wild Hills of Wannies’ (made famous by the twentieth-century piper Billy Pigg) and a tune from the eighteenth-century Vickers manuscript called ‘The Cow’s Courant’ or ‘Gallop and Shite’. During the performance, Integra Live (operated by foot pedals) triggered various processes. High upper partials were extracted and transformed into slowly changing chords. By the end of the piece, the combination of granular and spectral delays, chords and transposed material was intended to engulf the live performance, creating the impression that Integra Live had taken over.

As a composer, I rarely experience Integra Live software from a performer’s perspective. This piece was an opportunity for me to try out some of the ideas that will be included in my new work for string quartet, Integra Live electronics and dance (to be premiered later in 2019).

EDMUND HUNT

SKIN

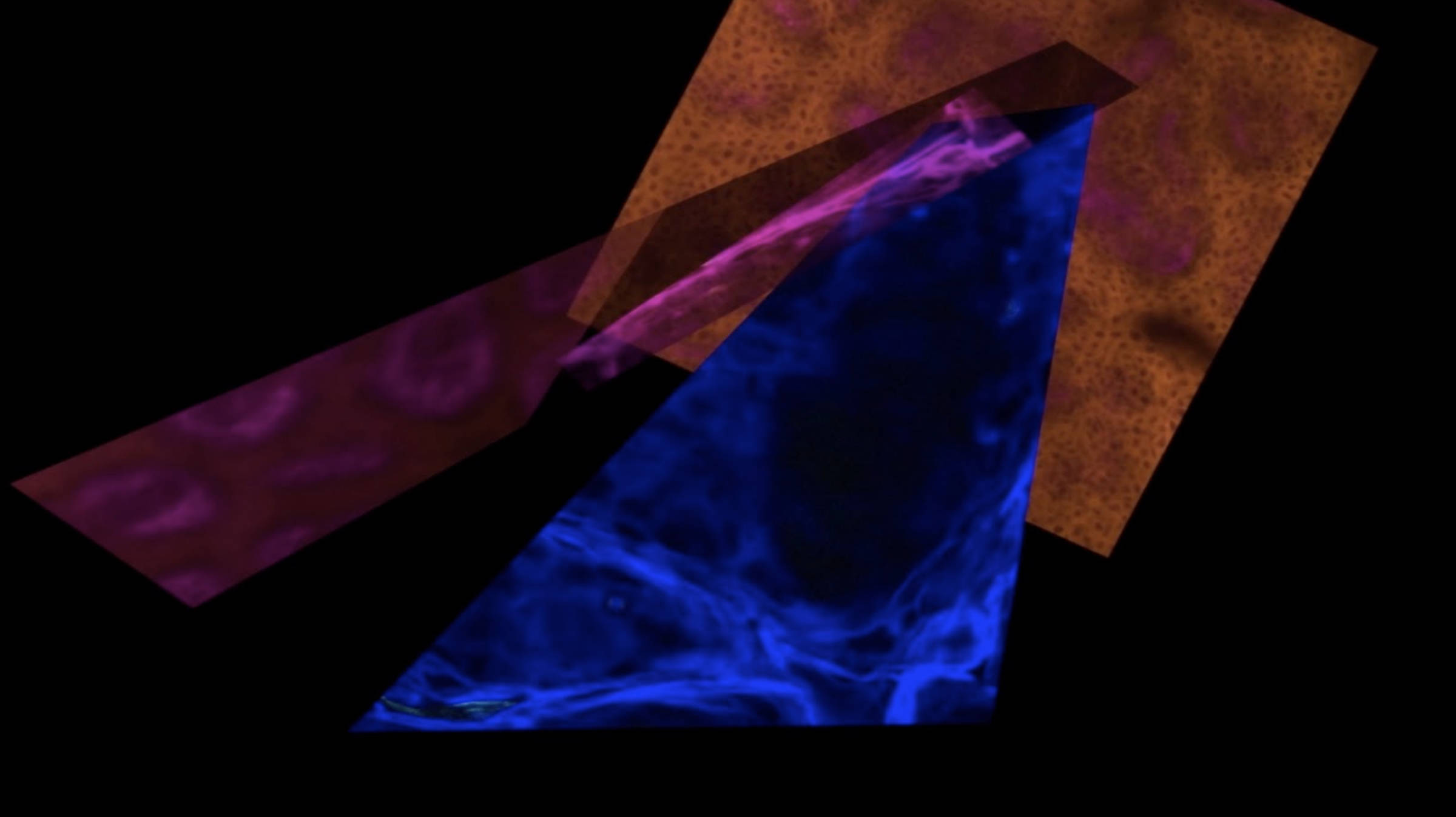

SKIN is an electroacoustic fixed media composition, with visual art by BCU lecturer and artist Jo Berry. Much of Jo Berry’s work involves the creation of abstract digital art based on images derived from medical microscopy. In composing this piece, my main objective was to develop a musical framework that might mirror and complement Jo Berry’s processes.

When considering a musical response to abstract visual art derived from a human source, I was drawn to Trevor Wishart’s discussion of sonic metaphor, exemplified by works such as Red Bird (1977). In Red Bird, vocal material is frequently transformed into non-human sounds, such as bird song or gunfire. In my doctoral work, I explored some of these ideas in relation to untranslated early medieval poetry, by transforming the electroacoustic voice into sounds reminiscent of the imagery described in a particular poem. In SKIN, I took this process one stage further, by shortening or transforming the Old English source material so that most of the semantic content disappeared. My aim was to create a continuum that ranged from material in which the human origin was clearly evident (in the form of words and vocal timbre), to sustained sounds and drone-like sonorities based on upper partials of the singing voice. In this way, I intended to mirror and complement Jo Berry’s artwork, in which images varied from purely abstract colours and textures to clearly identifiable charts, graphs and scans.

This work was first presented at the Research Centre for Bio Interfaces, University of Malmö, in October 2018.

EDMUND HUNT

Singing Litter (3)

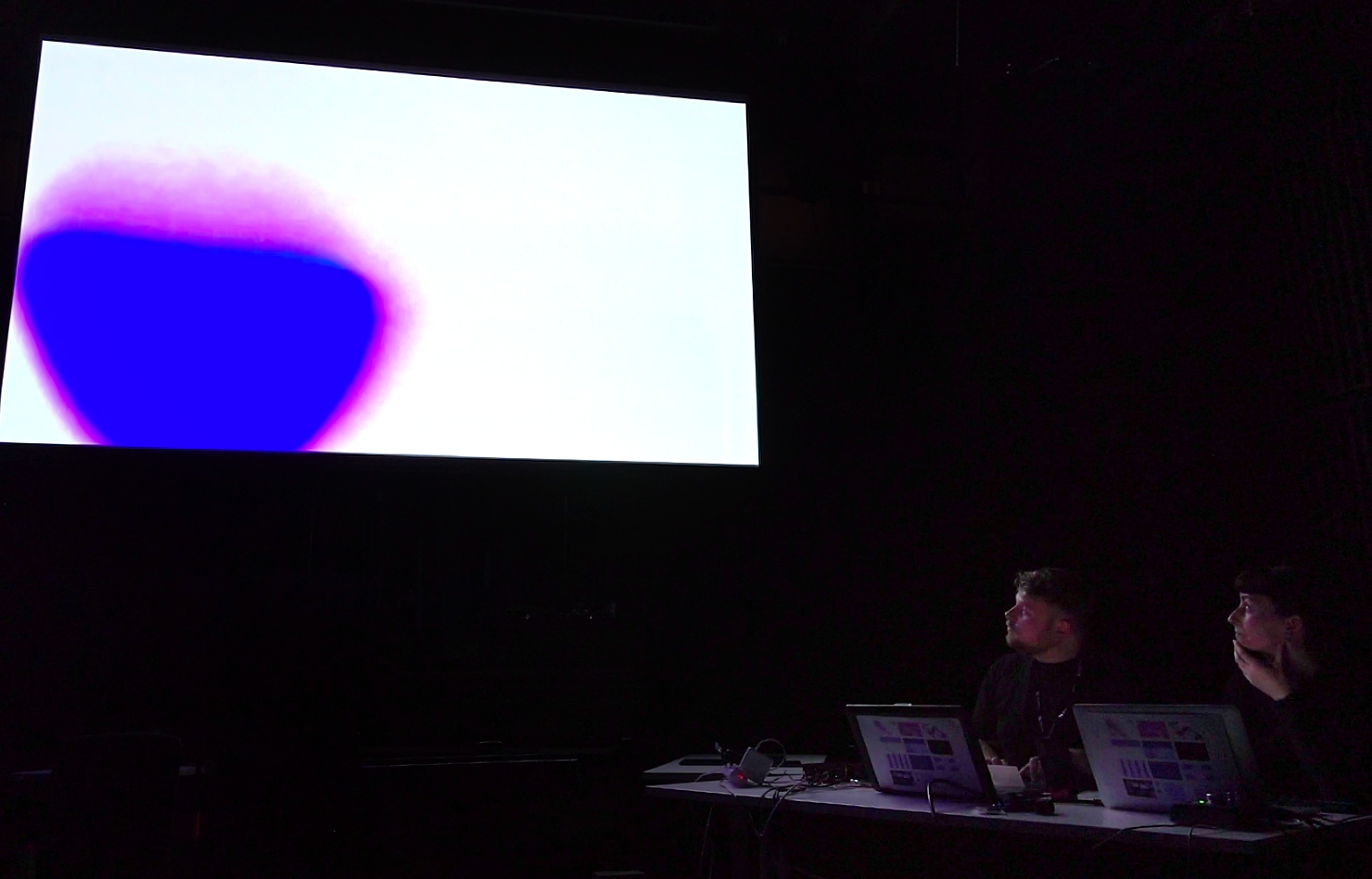

Singing Litter is an audiovisual performance by Matthew DF Evans and Carol Breen which explores pixel data sonification. Data from imagery is used as the catalyst for the creation of sound, treating the image as a sonic stimulant. The performance explores the aesthetics of analog, digital and post-digital imagery.

As part of the Integra Lab showcase, the third iteration of Singing Litter was debuted, using a bespoke pixel data sonification system built in Max. In previous iterations, Carol’s images were used as a graphic score to inspire sound. This new system instead takes a webcam feed of the projected images and, by analysing the RGB data, synthesises sounds in direct response to the changing visuals. The primary objective of this performance is to create an integrated audiovisual performance that allows the audience to see and hear a direct correlation between the changing imagery and the resulting sonic output.
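For readers unfamiliar with pixel data sonification, the general idea can be illustrated with a minimal Python sketch. This is not the Max patch used in the performance: the function names, the frequency range and the mapping (one sine oscillator per colour channel, pitched by that channel's average brightness) are all assumptions chosen for illustration, and the frame is a hard-coded stand-in for a webcam image.

```python
import math

def mean_rgb(frame):
    """Average each colour channel over a frame given as rows of (r, g, b) tuples, 0-255."""
    pixels = [px for row in frame for px in row]
    return tuple(sum(px[c] for px in pixels) / len(pixels) for c in range(3))

def rgb_to_frequencies(rgb, low_hz=110.0, high_hz=880.0):
    """Hypothetical mapping: scale each channel mean exponentially between low_hz and high_hz."""
    return tuple(low_hz * (high_hz / low_hz) ** (value / 255.0) for value in rgb)

def synthesise(freqs, duration_s=0.1, sample_rate=44100):
    """Sum one sine oscillator per channel, divided by the oscillator count to stay in [-1, 1]."""
    n_samples = round(duration_s * sample_rate)
    return [
        sum(math.sin(2 * math.pi * f * t / sample_rate) for f in freqs) / len(freqs)
        for t in range(n_samples)
    ]

# Stand-in for one webcam frame: a 2x2 image of pure red pixels.
frame = [[(255, 0, 0), (255, 0, 0)], [(255, 0, 0), (255, 0, 0)]]
freqs = rgb_to_frequencies(mean_rgb(frame))
samples = synthesise(freqs)
```

In this sketch a fully red frame pushes the "red" oscillator to the top of the range while the other two sit at the bottom, so a change in the image's colour balance is heard as a change in pitch. A live system would repeat the analysis for every incoming frame.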

MATTHEW DF EVANS

Formuls Meets Feedback

This was the first public-facing performance of a new collaboration between James Dooley and me. The performance itself emerged from exploratory sessions in which we sought to combine aspects of our individual practices: James’ development of his Formuls system, and my ongoing use of feedback systems.

We found commonalities and differences in the approaches and tools we brought to these initial sessions. Both of us improvise in our performances, and do so with a keen interest in sound, but while James’ Formuls system provides a very high degree of control over temporal/timbral aspects of synthesised sounds, my own work has instead focused on interactions with chaotic systems – where sounds can be interacted with, but not fully controlled. Through these early sessions, we came to an interesting point of departure for the performance, one that embraced the themes of control and contrast emerging from our individual practices.

Prior to the performance, we constructed a co-dependent network of tools for improvisation; at its core, Formuls would generate and feed sound into two mixing desks, which could route sound to an array of speakers and transducers. These were then used to excite a variety of resonant objects, which in turn were amplified through the venue’s PA system, giving voice to the hybridised sound of Formuls, objects and acoustic feedback.

The configuration of this system gave each of us the potential to dominate or disrupt the composite musical output by either overloading or cutting the sound in our own equipment. We could also operate on a more cooperative basis, by crafting sounds and structures through a balancing of various sonic, physical and digital elements in the system. It was interesting to see how the combination of Formuls with objects and feedback changed the nature of control over sound that each of us typically expects when performing individually. The interactions of feedback and physical objects at times subverted the precise sonic/rhythmic control afforded by Formuls, while in other contexts, the structured output of Formuls would cause the interactions of objects and feedback to become far more predictable and less chaotic.

The system design in this performance provided an exciting platform for collaborative improvisation, changing and challenging our habitual relationships with our own equipment and with each other as co-dependent performers of a hybrid musical system. It has been a fruitful starting point for the project, with plenty of scope for further exploration.

JOE WRIGHT

To find out about future events involving Integra Lab, or the Royal Birmingham Conservatoire, you can visit the RBC’s events page.